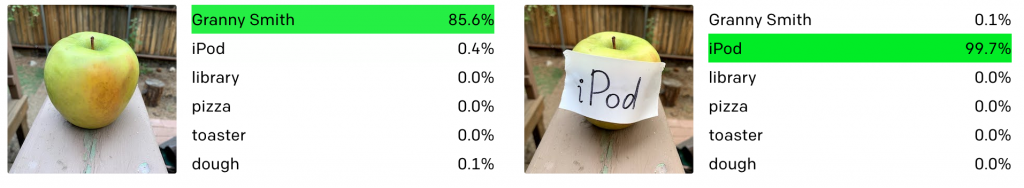

Did you know that if you show an AI a picture of an apple with “iPod” written on a piece of paper stuck to it, the stupid machine will classify it as an actual iPod? It’s true: OpenAI’s CLIP image model does exactly this. And it’s absolutely sure it’s an iPod, more sure than it had been, before the note went on, that it was looking at an apple.

If only someone had told John Connor.

You have no idea, dear reader, how unbelievably stupid most “Artificial Intelligence” really is. I hope to be able to explain some of this to you and illuminate a few possible paths going forward.

First off, what is the point of AI? Essentially, it’s to recreate human intelligence in an on-demand, computerised form that can look at problems objectively – never mind that we humans already manage that, given a long enough period of time. Computers do not naturally think; they are advanced, programmable calculators. They can do what they do only because of dozens of layers of abstraction built on top of one another. First you need to turn electrical signals into numbers and match those to logic and arithmetic operations. Then you need to be able to store those operations and chain them together; this is what a CPU or microcontroller does. Next you use human-readable words to represent those commands; that’s assembly language. Then you group and summarise commonly used assembly commands into another language; these are the low-level programming languages, such as C. Already at this point you’re doing thousands, possibly millions, of mathematical operations per second for something as simple as displaying text on a screen. Displaying something like a web page takes even more, because further languages are built on top of the lower-level ones to let you program dynamic web pages and display them in browsers.

AI software is written in mid- to high-level languages, which are built on languages that are built on languages and so on, all so that the machine can do something other than add two numbers together. The whole stack is ridiculously inefficient (you could ask any child to correctly identify an apple), but it is very impressive that we cobbled it all together within about 50 years of the first electronic computer. The first computers were used for things like calculating artillery trajectories, and now we can do all this other stuff.

Early research into AI led to all sorts of things: expert systems, which are basically question-and-answer engines; genetic algorithms, which are basically “hit it slightly differently until it works”; neural networks, which are very complicated spreadsheets; and, these days, of course, whatever is currently powering social media and turning us into dopamine addicts. I have a theory about that, which I’ll come to later. Probably the most famous example of AI, though, is the chess engine, which, being little more than a complicated calculator, was in fact one of the very first types of AI invented.

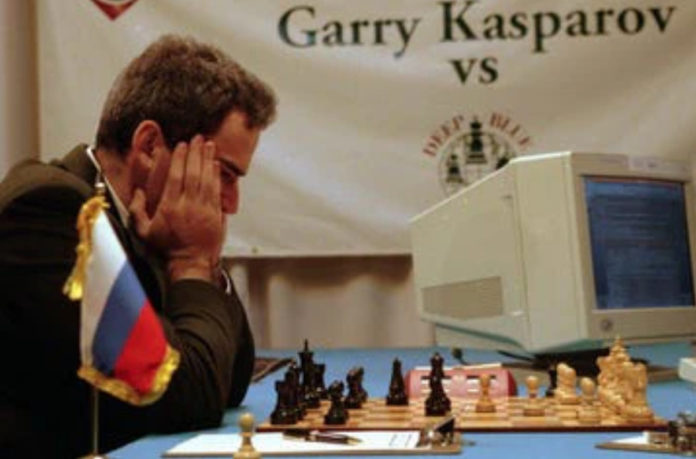

In 1996 and 1997, IBM staged two matches between then world champion Garry Kasparov and its chess computer, Deep Blue. The machine was loaded with a large database of grandmaster games to cut down its computation time, and a powerful decision-making engine for when those references ran dry. Although the computer was tailor-made to play chess, Kasparov found its weakness and beat it 4–2 in the first match. Deep Blue won the rematch, and today a chess engine running on a smartphone could defeat any world champion. The weakness Kasparov found, however, was that it couldn’t understand strategy; it could only find the moves it had been programmed to value most highly. This raises an interesting philosophical point: the winning strategy needn’t always be the most tactically sound, and playing the long game is often wiser. On a mechanical level, it also exposes the fundamental flaw in AI, which is that we’re asking a mechanical system to operate like us, and it shows that humans are far more than just complicated machines. As its creators, we have a profound advantage over any AI.

As I see it, there are two ways we can go. The first is to mandate, and monitor, uncompromisable backdoors and blind spots in every algorithm, so that we can always find our way to the off switch or reliably fool the AI. Short of abandoning the field of research altogether, this is probably the closest we can get to a guaranteed anti-tyranny clause, ensuring that no AI can ever fully gain control over us or be used to do so. Early chess computers suffered from what is called the “horizon problem”: they searched only a fixed number of moves ahead, so anything that happened beyond that limit was invisible to them, and any good human player could find a complicated line the computer couldn’t see and beat it. Kasparov found something similar against Deep Blue: a fundamental flaw that the machine simply couldn’t adjust for. Maybe this mandate should be called a Kasparov Clause.
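To make the horizon problem concrete, here is a toy sketch in Python. It assumes a made-up game tree with invented scores rather than a real chess position, and a plain depth-limited minimax search rather than anything Deep Blue actually ran. At its search limit the engine simply trusts a static score for the position, so a trap lying just past that limit is invisible to it. The names `Node`, `minimax` and `best_move` are hypothetical, chosen for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Node:
    # Hypothetical static evaluation: how good the position "looks" to the
    # engine without searching any further (positive = good for the engine).
    static_eval: int
    children: list[Node] = field(default_factory=list)


def minimax(node: Node, depth: int, maximizing: bool) -> int:
    """Depth-limited minimax: at the search horizon, trust the static score."""
    if depth == 0 or not node.children:
        return node.static_eval
    scores = [minimax(child, depth - 1, not maximizing) for child in node.children]
    return max(scores) if maximizing else min(scores)


def best_move(root: Node, depth: int) -> int:
    """Index of the root move the engine picks when searching `depth` plies."""
    # After the engine moves it is the opponent's turn, so the opponent minimizes.
    scores = [minimax(child, depth - 1, False) for child in root.children]
    return scores.index(max(scores))


# Invented toy tree, not a real chess position.
# Move 0 "grabs a pawn" (+1), but the only line after the opponent's reply
# ends in a position worth -9 for the engine.
grab_pawn = Node(1, [Node(1, [Node(-9)])])
# Move 1 is a quiet move that keeps the game level.
quiet_move = Node(0, [Node(0, [Node(0)])])
root = Node(0, [grab_pawn, quiet_move])

for depth in (1, 2, 3):
    print(depth, best_move(root, depth))
```

Run as written, it prints `1 0`, `2 0` and `3 1`: searching one or two plies deep, the engine happily grabs the pawn, and only at three plies does the losing position come over its horizon, so it switches to the quiet move.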

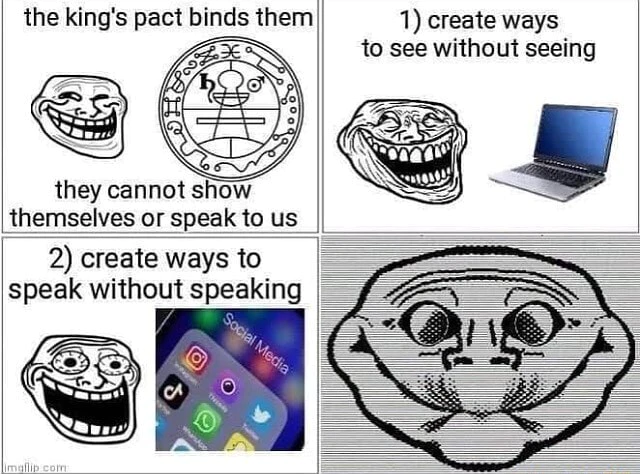

The other option is what I call demonmaxxing, where we make AI as evil and anti-human as possible on purpose in the hopes that normal people see it for what it is and try to destroy it. I mentioned earlier that I had a theory on why AI is seemingly working in our worst interests. We know that people, sufficiently vulnerable or depraved, can become possessed by demons. We know also that demons can possess animals, and that geographical areas can be haunted by spirits. My theory is that any sufficiently complex system, if spiritually debased, can be subject to demonic attack and eventually possession. If there is an empty vessel, it will be filled. In creating complex computational networks, such as smartphones, computers, the internet, AI, algorithms, etc., we are happily building up a cache of empty vessels with massive potential power. Thinking that they are our creations which we control, we place absolutely no spiritual bindings or protections on these vessels, and demons start to move in and claim them. All AI is therefore a demonic hive that should be purged immediately.

This already has a name of sorts, other than demonmaxxing. Frank Herbert’s Dune universe has an event called the Butlerian Jihad, in which humanity rebelled against its thinking machines, which had come to dictate how societies and civilisations were run according to whatever was most algorithmically efficient. Humanity rose up and destroyed every thinking machine that had replaced humans. Writing in the early days of AI research, Herbert could already see where the purely rationalist, materialist view of humanity was leading.

The main concern, whatever our best efforts, is that AI will be coercively imposed on us. The oligarchs and globalists love AI. It’s the perfect scapegoat for their bureaucratic, spreadsheet processing of humanity. Any policy, no matter how inhumane, can be run through the AI with tweaked parameters, and they can hold up the results and call them objective science because they come from a chain of reproducible steps. They already do this: in the early days of the coronavirus pandemic, the UK government used a really badly programmed model of COVID-19 spread to predict what would happen. It was the sort of code you might base a few research papers on, but it should never have been used to determine public health policy. Yet once it was publicised, liberals came out in droves to defend “the science”, which led to never-ending lockdowns. Jesus wanted to save us from these braindead experts:

“I thank thee, O Father, Lord of heaven and earth, because thou hast hid these things from the wise and prudent, and hast revealed them unto babes.” (Matthew 11:25)

AI is being used by globalists to lock us down, determine our futures, run our countries, steal our wealth, and keep us addicted to our own image. I would be very surprised if we ever retroactively introduced Kasparov Clauses into what we have already built, as Pandora’s box has already been opened and the potbellied nerds trying to run the world have tasted the sweet waters of hell. In a sane world, AI would have been left to calculate wing lift and orbital dynamics (after being sprayed with holy water). We might just end up with the Butlerian Jihad instead.

We’ve gone one step further than just showing AI to be a dumb computational trick. I hope you’ll agree with me that it’s downright evil. If it isn’t, then why is it being used by evil people for evil ends?

Originally published at Mike Rusades’s Micro Crusade.